For those who are following along at home…

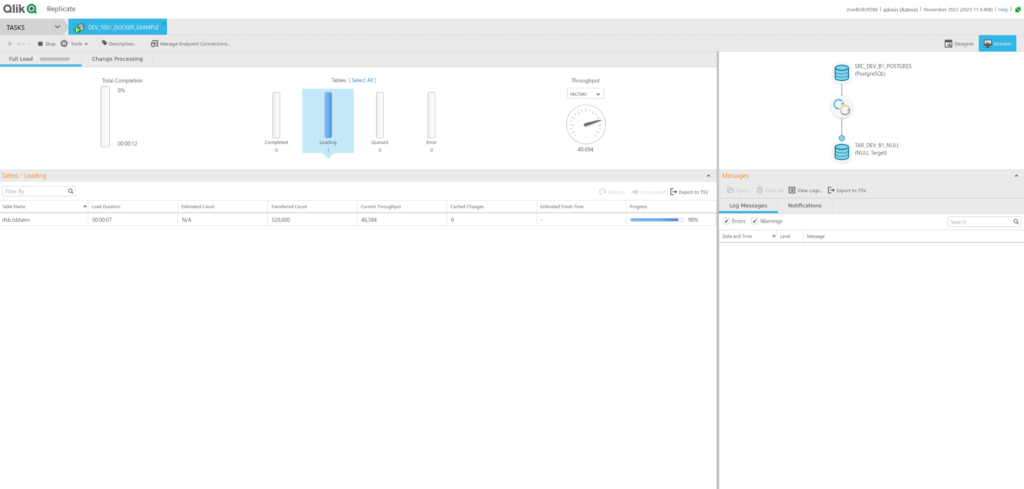

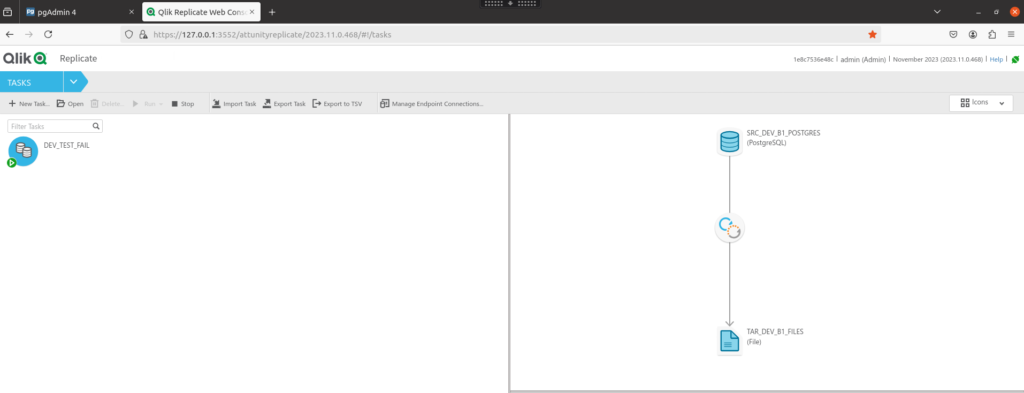

We have been toiling on replicating to AWS Postgres RDS with Qlik Replicate for the past two months, trying to achieve a baseline of 300 tps.

After many suggestions, tuning and tweaks, conference calls, benchmarks, learning experiences and prayers, we couldn’t get our tps close to our baseline.

You can read the main findings in the following pages:

Things that were suggested to us that I did not add to this blog series:

- Try Async commits on RDS Postgres. Tried it and got negligible increases.

- Shifting the QR server to AWS. Bad idea as there will be even more traffic from the busy DB2 database going across for QR to consume.

- Use Amazon Aurora instead of RDS. The downstream developers did not have the appetite to try Aurora; especially with the issues leaning towards network speed.

- Use GCP version of Postgres instead of AWS. The downstream developers did not want to commit to another cloud provider.

The problem is how the network connectivity behaves with the Postgres ODBC and the round trips it must do between our location and the AWS data centre. We can try – but we are bound by the laws of physics and the speed of light.
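As a rough back-of-the-envelope sketch of why round trips matter: if changes are applied one at a time on a single connection, each change has to wait for at least one network round trip. The figures below are hypothetical and are not measurements from this project; plug in your own round trip time.

```sql
-- Hypothetical figures for illustration only; NOT measurements from this project.
DECLARE @round_trip_ms          decimal(9,2) = 20.0; -- assumed network round trip time
DECLARE @round_trips_per_change int          = 1;    -- assumed round trips per applied change

-- Upper bound on changes per second for one strictly serial session.
SELECT CAST(1000.0 / (@round_trip_ms * @round_trips_per_change) AS decimal(9,1))
       AS max_tps_per_session;
```

Under these assumed numbers a single serial session cannot exceed 50 changes per second, and reaching 300 tps would need a round trip of roughly 3.3 ms. Batching or parallel sessions change the arithmetic, so treat this as an intuition aid only.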

Decisions to be made.

All through our benchmarking and investigation, we have been replicating in parallel to a Development MS-SQL database in the same data centre as the DB2 database, to give us an idea of what speed we could potentially reach. Without triggers on the MS-SQL destination table, we were easily hitting 300 tps. Ramping the changes up, we can hold at 1K tps with no creep in latency.

We were happy with these results, especially as the MS-SQL database was just a small underpowered shared Dev machine, not a full-blown dedicated server.

It took a brave solution architect to propose that we shift from AWS RDS Postgres to an on prem MS-SQL server; especially when our senior management strategy is to push everything to the cloud to reduce the number of on prem servers.

In the end with all our evidence on the performance and the project’s willingness to push on with the proposed solution, the solution stakeholders agreed to move the destination to an on prem database.

They initially wanted us to go with an on prem Postgres database; but since all our Database Administrators are either Oracle or MS-SQL experts and we have no Postgres experts, we went to good old MS-SQL.

It worked; but…damn triggers.

I volunteered to convert the Postgres SQL code into T-SQL as I have worked with T-SQL for the past decade. The conversion went smoothly, and I took the opportunity to optimise several sections of the code to make the solution more maintainable and to run faster.

With our new MS-SQL database all coded up and the triggers turned off; the SVT (stress and volume testing) ran at the TPS for which we were aiming.

But when we turned on the triggers; the performance absolutely crashed.

I was mortified – was my coding shot, and had the additional changes I made worsened the performance?

I checked the triggers. I checked the primary keys and the joins. I checked the horizontal partitioning. I checked the database server stats for CPU and memory usage.

Nothing – could not locate the performance problem.

I went back to Qlik Replicate and examined the log files.

Ahh – here is something. The log file was full of entries like this:

00012472: 2024-05-27T16:03:54 [TARGET_APPLY ]W: Source changes that would have had no impact were not applied to the target database. Refer to the 'attrep_apply_exceptions' table for details (endpointshell.c:7632)

Looking inside the attrep_apply_exceptions table, there were corresponding entries like:

    UPDATE [dbo].[TEST_DESTINATION]
    SET [ACCOUNT_ID] = 2,
        [DATA_1] = 'Updated',
        [DATA_2] = 'Data'
    WHERE
        [ACCOUNT_ID] = 2;
    -- 0 rows affected

Which was confusing; I checked the destination table, and the update was applied. Why was this update deemed a failure and logged to the attrep_apply_exceptions table? It must be an error in the trigger.
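For anyone wanting to look at the same evidence, a query along these lines lists the most recent exceptions for one table. It is a sketch only: it assumes the control table sits in the dbo schema of the target database and uses the column names I understand Qlik Replicate to create for it, so check both against your own environment.

```sql
-- Assumption: the control table is in the dbo schema of the target database
-- and has these column names; verify against your own installation.
SELECT TOP (20)
    ERROR_TIME,
    TASK_NAME,
    TABLE_OWNER,
    TABLE_NAME,
    [STATEMENT],
    [ERROR]
FROM dbo.attrep_apply_exceptions
WHERE TABLE_NAME = 'TEST_DESTINATION'
ORDER BY ERROR_TIME DESC;
```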

The cause of the problem

Our code can be paraphrased like:

    CREATE TABLE dbo.TEST_DESTINATION
    (
        ACCOUNT_ID int NOT NULL,
        DATA_1 varchar(100) NULL,
        DATA_2 varchar(100) NULL,
        PRIMARY KEY CLUSTERED
        (
            ACCOUNT_ID ASC
        )
    );
    GO

    CREATE TABLE dbo.TEST_MERGE_TABLE
    (
        ACCOUNT_ID int NOT NULL,
        DATA_1 varchar(100) NULL,
        DATA_2 varchar(100) NULL,
        DATA_3 varchar(100) NULL,
        PRIMARY KEY CLUSTERED
        (
            ACCOUNT_ID ASC
        )
    );
    GO

    CREATE OR ALTER TRIGGER dbo.TR_TEST_DESTINATION__INSERT
    ON dbo.TEST_DESTINATION
    AFTER INSERT
    AS
    INSERT INTO dbo.TEST_MERGE_TABLE
    SELECT
        ACCOUNT_ID,
        DATA_1,
        DATA_2,
        'TRIGGER INSERT' AS DATA_3
    FROM INSERTED;
    GO

    CREATE OR ALTER TRIGGER [dbo].[TR_TEST_DESTINATION__UPDATE]
    ON [dbo].[TEST_DESTINATION]
    AFTER UPDATE
    AS
    UPDATE dbo.TEST_MERGE_TABLE
    SET ACCOUNT_ID = X.ACCOUNT_ID,
        DATA_1 = X.DATA_1,
        DATA_2 = X.DATA_2,
        DATA_3 = 'TRIGGER DATA'
    FROM dbo.TEST_MERGE_TABLE T
    JOIN INSERTED X
        ON X.ACCOUNT_ID = T.ACCOUNT_ID
    WHERE
        1 = 0; -- This predicate can be either true or false. For example we set it false
    GO

The problem is in how the trigger TR_TEST_DESTINATION__UPDATE behaves if its own UPDATE affects 0 rows. This can be a legitimate occurrence depending on a join in the trigger.

If I run a simple update like:

    UPDATE dbo.TEST_DESTINATION
    SET DATA_1 = 'Trigger Update'
    WHERE
        ACCOUNT_ID = 1;

The SQL engine returns:

    (0 row(s) affected) -- Returned from the trigger
    (1 row(s) affected) -- Returned from updating dbo.TEST_DESTINATION

My theory is that Qlik Replicate, when reading the row counts returned from executing the SQL statement on the destination server, only considers the first one to determine if the change was a success or not. Since the first row count comes from the trigger with 0 rows affected, Qlik considers that the update was a failure and therefore logs it into the attrep_apply_exceptions table.

Apart from this being incorrect, as the trigger code is logically working correctly, Qlik Replicate must make another trip to write to the exception table. This resulted in drastically increased latency.
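To see the two row counts outside of Qlik Replicate, the paraphrased tables and triggers can be exercised by hand from a query window. This is a minimal sketch: the seed values are made up for illustration, and it assumes the objects were created exactly as shown earlier, without SET NOCOUNT ON in the triggers.

```sql
-- Hypothetical seed row; the values are illustrative only.
-- The INSERT trigger copies this row into dbo.TEST_MERGE_TABLE.
INSERT INTO dbo.TEST_DESTINATION (ACCOUNT_ID, DATA_1, DATA_2)
VALUES (1, 'Original', 'Data');

-- Fire the UPDATE trigger. Without SET NOCOUNT ON in the trigger, the
-- trigger's own row count is reported ahead of the statement's row count.
UPDATE dbo.TEST_DESTINATION
SET DATA_1 = 'Trigger Update'
WHERE ACCOUNT_ID = 1;

-- Confirm the change really was applied to the destination table.
SELECT ACCOUNT_ID, DATA_1, DATA_2
FROM dbo.TEST_DESTINATION
WHERE ACCOUNT_ID = 1;
```

The final SELECT is there to make the point that the row was updated even though the first message reported says 0 rows were affected.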

Fixing the issue

The fix (once the problem is known) is relatively straightforward. Any row counts returned from the trigger need to be suppressed. For example:

    CREATE OR ALTER TRIGGER [dbo].[TR_TEST_DESTINATION__UPDATE]
    ON [dbo].[TEST_DESTINATION]
    AFTER UPDATE
    AS
    BEGIN
        SET NOCOUNT ON; -- Suppress returning the row count

        UPDATE dbo.TEST_MERGE_TABLE
        SET ACCOUNT_ID = X.ACCOUNT_ID,
            DATA_1 = X.DATA_1,
            DATA_2 = X.DATA_2,
            DATA_3 = 'TRIGGER DATA'
        FROM dbo.TEST_MERGE_TABLE T
        JOIN INSERTED X
            ON X.ACCOUNT_ID = T.ACCOUNT_ID
        WHERE
            1 = 0; -- This predicate can be either true or false
    END

When the update statement is run again; the following is returned:

(1 row(s) affected)

Qlik Replicate will now consider the update as a success and not log it as an exception.
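The same change is worth applying to every trigger on a replicated table; the paraphrased TR_TEST_DESTINATION__INSERT above, for instance, has no SET NOCOUNT ON either. As a rough way to find candidates, the sketch below searches trigger definitions in the current database. It is a plain text match, so it can miss or wrongly flag triggers depending on spacing, comments and collation; treat the result as a list to review, not a verdict.

```sql
-- List DML triggers whose definition does not contain "SET NOCOUNT ON".
-- Heuristic only: a simple text search over the stored trigger source.
SELECT
    OBJECT_SCHEMA_NAME(tr.parent_id) AS table_schema,
    OBJECT_NAME(tr.parent_id)        AS table_name,
    tr.name                          AS trigger_name
FROM sys.triggers AS tr
JOIN sys.sql_modules AS m
    ON m.object_id = tr.object_id
WHERE tr.parent_class = 1            -- triggers attached to tables or views
  AND tr.is_ms_shipped = 0
  AND m.definition NOT LIKE '%SET NOCOUNT ON%'
ORDER BY table_schema, table_name, trigger_name;
```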